During the learning process, however, different actions might have the same value of Q. In this example where the matrix Q has converged to its optimal value, it defines a stationary policy: each row contains one action with a unique maximum value. The consecutive states are represented by (0,0), (1,0), (1,2), and (2,2), respectively. Solution of the Tower of Hanoi game with 2 disks. In this example, by starting at state (0,0) and sucessively choosing the move with the highest value of Q, the reader can convince himself that the Q matrix leads the agent through the sequence of states (0,0) - (1,0) - (1,2) - (2,2), which corresponds to the 3-move optimum solution of the 2-disk Tower of Hanoi game (Figure 6).įigure 6. The values of the matrix Q are essentially weights which can be assigned to vertices of the state transition graph in Figure 3, which guide the agent from the initial state to the goal state in the shortest possible way. 'Action value' matrix Q for the Tower of Hanoi game with 2 disks, learned with a discount factor of = 0.8, learning factor = 1.0, and 1000 training episodes. The result for 2 disks is shown in Figure 5.įigure 5.

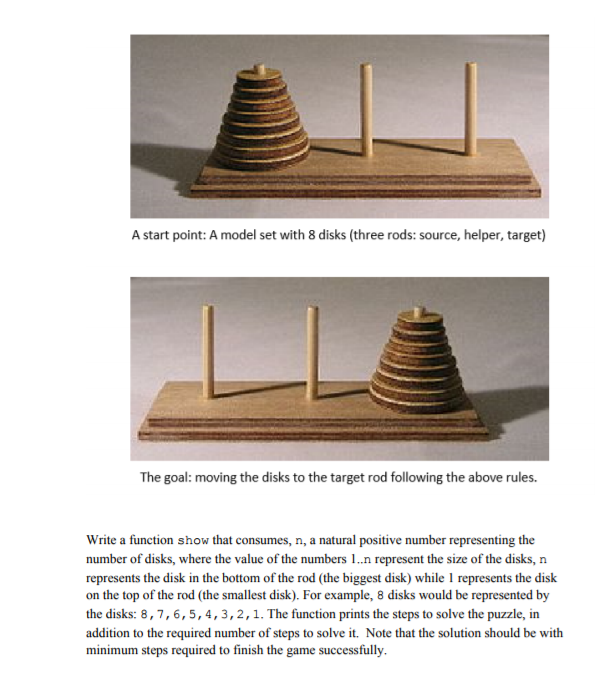

The matrix of 'values' for each action in each state is called Q and can be recursively solved for, or 'learned', in a stochastic manner as described by Watkins & Dayan. Ultimately, we seek to assign a 'value' to each possible move in each state, so that by maximizing over these values we can define a policy leading to the optimal solution. In the other states, each of the 2 or 3 possible moves has an equal reward of 0, and there is no apparent reason to choose one over the other. The reward matrix R describes only immediate rewards: a reward of 100 can only be achieved from the penultimate states (0,2) and (1,2). Moves which lead to the goal state (2,2) are assigned a reward of 100, illegal moves of, and all other moves of 0. The states are labeled by tuples as in Figures 2 and 3, with the difference that pegs are indexed 'pythonically' from 0 to 2 rather than from 1 to 3. The ( i, j)th element represents the reward for moving from state i to j. Reward matrix R for a Tower of Hanoi puzzle with N = 2 disks. For simplicity, the reward matrix is shown for N = 2 disks in Figure 4.įigure 4. The first step in the program is to generate a reward matrix R which captures the rules of the game and gives a reward for winning it. The reward matrix R and 'action value' matrix Q for 2 disks In the following section, we outline how this is achieved by Q-learning. Thus for N = 3, the puzzle can be solved in 7 moves, as shown in Figure 3.

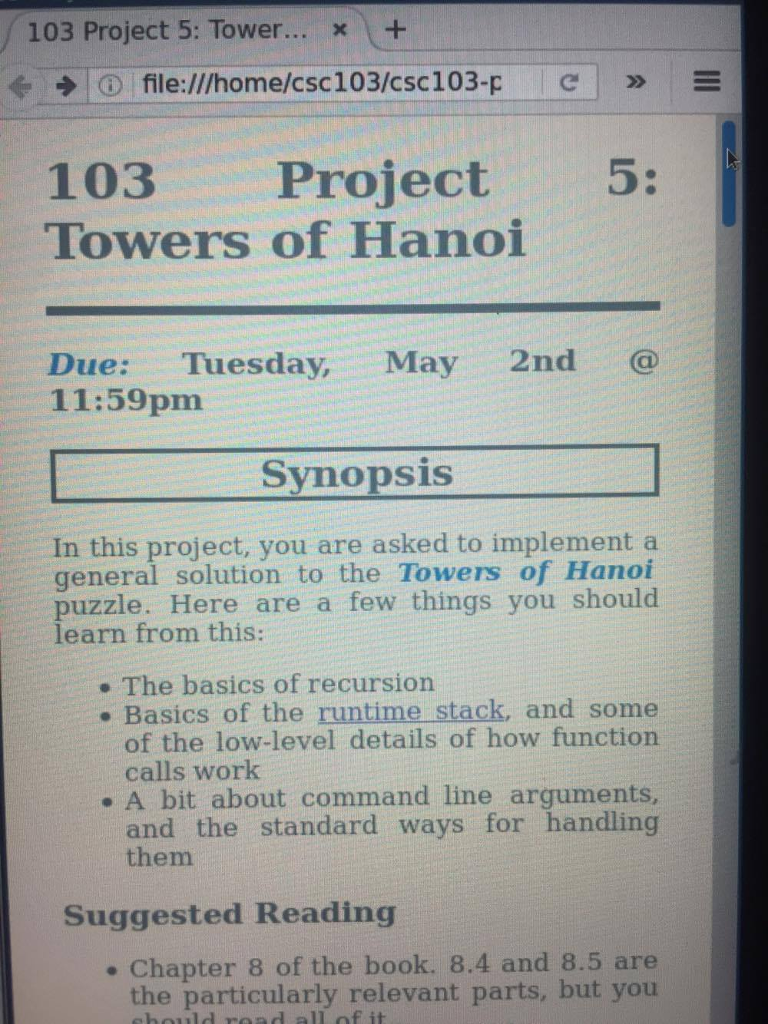

The minimum number of moves required to solve a Tower of Hanoi puzzle is 2 N - 1, where N is the number of disks. State transition graph for the three-disk Tower of Hanoi puzzle (above) and the corresponding optimal 7-move solution (below), which follows the path of the right hand side of the graph. The states of the puzzle and the transitions between them corresponding to legal moves form the puzzle's state transition graph, which is shown in Figure 3.įigure 3. Initial and goal states of the Tower of Hanoi puzzle with 3 disks. Thus, the initial state is (111), and the desired final state is (333) (Figure 2).įigure 2. The disks are labeled in order of increasing size. (Source: Wikipedia).įollowing Anderson, we represent the state of the game by tuples, where the i th element denotes which peg the i th disk is on. An animated solution of the Tower of Hanoi puzzle for 4 disks. Figure 1 shows the optimal solution for 4 disks.įigure 1. The only legal moves are those which take the top-most disk from one peg to another, with the restriction that a disk may never be placed upon a smaller disk. The objective of the game is to move all the disks to the third peg. The puzzle starts with all disks stacked on the first peg in ascending order, with the largest at the bottom and the smallest on top. The Tower of Hanoi game consists of three pegs and a number of disks of different sizes which can slide onto the pegs. The implementation follows the Q-learning algorithm as originally proposed by Watkins & Dayan. Here, a simpler puzzle is solved: the Tower of Hanoi game.

Chegg code hanoi towers professional#

Reinforcement learning has recently received renewed interest with the beating of a professional human Go player by Google Deepmind's AlphaGo. The script can easily be adapted to play the game with a different number of disks N, for example. In a Python environment with Numpy and Pandas installed, run the script hanoi.py to produce the three plots shown in the Results section. Solves the Tower of Hanoi puzzle by reinforcement learning.